Advanced Record Analysis – 2392528000, кфефензу, 8337665238, 18003465538, 665440387

Advanced Record Analysis treats a mix of numeric and non-Latin identifiers as probabilistic signals. It emphasizes transparent inference, robust feature extraction, and cross-source validation. The identifiers (2392528000, кфефензу, 8337665238, 18003465538, and 665440387) are treated as data points with potential lineage and uncertainty. The approach combines cleaning, pattern recognition, and auditable workflows to reveal latent structures without overcommitting to one interpretation. This groundwork sets the stage for principled insights and practical implications.

What Advanced Record Analysis Is and Why It Matters

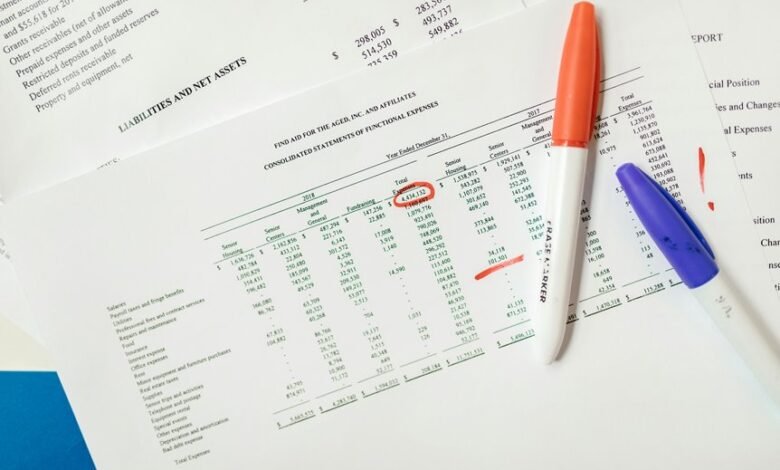

Advanced Record Analysis refers to a systematic approach for evaluating and interpreting complex data records to extract reliable insights. It frames uncertainty probabilistically, benchmarks evidence, and updates conclusions as new signals emerge.

This method underpins data governance by establishing standards, accountability, and stewardship. It also clarifies data lineage, revealing origin, transformations, and custodianship to support transparent decision-making.
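One way to read "updating conclusions as new signals emerge" is as repeated application of Bayes' rule. The sketch below is illustrative only: the function name `update`, the starting belief of 0.5, and the likelihood pairs are assumptions chosen for the example, not values taken from any real record set.

```python
def update(prior: float, p_signal_if_true: float, p_signal_if_false: float) -> float:
    """Return the posterior probability of a hypothesis after one signal (Bayes' rule)."""
    numerator = p_signal_if_true * prior
    evidence = numerator + p_signal_if_false * (1.0 - prior)
    if evidence == 0.0:
        raise ValueError("signal is impossible under both hypotheses")
    return numerator / evidence

# Hypothetical scenario: start undecided, then observe two signals.
# Each pair is (P(signal | hypothesis true), P(signal | hypothesis false)).
belief = 0.5
for likelihood_true, likelihood_false in [(0.8, 0.4), (0.9, 0.3)]:
    belief = update(belief, likelihood_true, likelihood_false)

print(f"belief after two signals: {belief:.3f}")
```

Each signal shifts the belief in proportion to how much more likely it is under the hypothesis than under its alternative, so weak signals move the estimate only slightly.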

Decoding Key Identifiers: 2392528000, 8337665238, 18003465538, 665440387

Decoding these identifiers involves evaluating how the numeric strings function as signals within data records.

The analysis is probabilistic: it frames identifiers as cues with associated uncertainty rather than as fixed truths.

It emphasizes data integrity and anomaly detection, highlighting how deviations from an expected pattern reveal inconsistencies while leaving room for researchers to weigh competing interpretations.
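A minimal sketch of this idea, assuming only the five identifiers named in the title: describe each string by a few auditable features, then flag any identifier whose shape deviates from the most common one. The helper names `describe` and `flag_outliers` are hypothetical, and "shape" here means only digit-only status plus length, which is a deliberately crude stand-in for real anomaly detection.

```python
import unicodedata
from collections import Counter

IDENTIFIERS = ["2392528000", "кфефензу", "8337665238", "18003465538", "665440387"]

def describe(identifier: str) -> dict:
    """Return simple, auditable features for one identifier string."""
    # The first word of each Unicode character name (e.g. "DIGIT", "CYRILLIC")
    # serves as a rough character class.
    classes = Counter(unicodedata.name(ch, "UNKNOWN").split()[0] for ch in identifier)
    return {
        "value": identifier,
        "length": len(identifier),
        "all_digits": identifier.isdigit(),
        "char_classes": dict(classes),
    }

def flag_outliers(features: list) -> list:
    """Return identifiers whose (all_digits, length) shape is not the most common one."""
    shapes = Counter((f["all_digits"], f["length"]) for f in features)
    common_shape, _ = shapes.most_common(1)[0]
    return [f["value"] for f in features if (f["all_digits"], f["length"]) != common_shape]

features = [describe(i) for i in IDENTIFIERS]
outliers = flag_outliers(features)

for f in features:
    print(f)
print("deviating from the most common shape:", outliers)
```

A flagged identifier is not necessarily wrong. The flag only marks it as a candidate for closer inspection.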

Pattern Recognition and Cross-Referencing Techniques for Complex Datasets

Pattern recognition in complex datasets relies on robust feature extraction, probabilistic modeling, and rigorous cross-referencing across heterogeneous data sources. This approach quantifies uncertainty, links disparate signals, and reveals latent structure through principled inference. Cross-referencing integrates records, timestamps, and identifiers, which makes pattern recognition more resilient to gaps in any single source. Analysts evaluate model assumptions, balance bias against variance, and communicate results transparently so that conclusions about data relationships remain interpretable and evidence-driven.
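Cross-referencing can be sketched as building an index from a normalized identifier to the set of sources that contain it. Everything below is an assumption made for illustration: the source names, the record layout with an `id` field, and the formatting variants (stray whitespace, a hyphen) are hypothetical and do not describe any real dataset.

```python
from collections import defaultdict

def normalize(raw: str) -> str:
    """Keep only letters and digits, then case-fold, so formatting variants compare equal."""
    return "".join(ch for ch in raw if ch.isalnum()).casefold()

def cross_reference(sources: dict) -> dict:
    """Map each normalized identifier to the set of source names that contain it."""
    seen = defaultdict(set)
    for name, records in sources.items():
        for record in records:
            seen[normalize(record["id"])].add(name)
    return dict(seen)

# Hypothetical sources holding the same identifiers in different formats.
sources = {
    "source_a": [{"id": "23925-28000"}, {"id": "665440387"}],
    "source_b": [{"id": " 2392528000 "}, {"id": "8337665238"}],
}

index = cross_reference(sources)
corroborated = sorted(key for key, names in index.items() if len(names) > 1)
print("seen in more than one source:", corroborated)
```

Identifiers that appear in only one source are not discarded. They simply carry less corroboration than those confirmed by several sources.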

Practical Frameworks: From Data Cleaning to Actionable Insights

How can data be transformed from noisy inputs into reliable, decision-ready outputs? Practical frameworks translate raw streams into actionable insights through disciplined cleaning, governance, and tooling. They balance uncertainty with probabilistic reasoning, emphasizing anomaly detection, cross-field mapping, and transparent data lineage. This approach supports informed choices while maintaining accountability through auditable, repeatable processes across complex environments.
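The link between cleaning and lineage can be made concrete with a small pipeline in which every step records what it changed. This is a sketch under stated assumptions: the `Record` class, the step names, and the noisy input string are hypothetical, and a production pipeline would likely persist the lineage rather than hold it in memory.

```python
from dataclasses import dataclass, field

@dataclass
class Record:
    value: str
    lineage: list = field(default_factory=list)  # human-readable audit trail

def apply_step(record: Record, step_name: str, fn) -> Record:
    """Apply one cleaning function and append a note describing its effect."""
    new_value = fn(record.value)
    if new_value == record.value:
        note = f"{step_name}: unchanged"
    else:
        note = f"{step_name}: {record.value!r} -> {new_value!r}"
    return Record(new_value, record.lineage + [note])

# Hypothetical cleaning steps, applied in order.
STEPS = [
    ("strip_whitespace", str.strip),
    ("drop_separators", lambda s: s.replace("-", "").replace(" ", "")),
]

def clean(raw: str) -> Record:
    """Run every step on a raw value, keeping the full trail from ingestion onward."""
    record = Record(raw, [f"ingested: {raw!r}"])
    for name, fn in STEPS:
        record = apply_step(record, name, fn)
    return record

cleaned = clean("  1800-346 5538 ")
print(cleaned.value)
for entry in cleaned.lineage:
    print("  ", entry)
```

Because each step returns a new `Record` instead of mutating the old one, the trail can be replayed or audited without rerunning the pipeline.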

Frequently Asked Questions

What Is the Origin of the Numeric Identifiers 2392528000 and 665440387?

The origin is unconfirmed. The values appear arbitrary, which suggests algorithmic assignment rather than historical lineage, but that reading is a probabilistic inference and not an established fact. Until provenance is documented, the origin remains uncertain.

How Do These Codes Relate to Real-World Records or Entities?

The codes likely map to real-world entities through data provenance and metadata schemas, which guide probabilistic linkage across records. They are better read as indicators of related groups of records than as definitive identifiers, so any linkage should carry traceable origins and an explicit statement of uncertainty.

Can These Numbers Indicate Temporal or Geographic Patterns?

Temporal and geographic patterns may emerge: probabilistic signals can suggest sustained sequences or regional clustering. Such patterns support estimates of likelihood, not firm conclusions, and should be read from a detached, data-driven perspective.

Which Privacy Considerations Arise When Analyzing Such Identifiers?

Privacy concerns arise from analyzing such identifiers, as patterns may reveal sensitive traits unless proper controls exist. Data minimization, provenance, anonymization, consent management, and careful data sharing underpin trustworthy practices and mitigate re-identification risks in probabilistic assessments.
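As one illustration of data minimization, raw identifiers can be replaced with keyed tokens before analysis. The sketch below uses HMAC-SHA256 from the Python standard library. The key value, the 16-character truncation, and the function name `pseudonymize` are assumptions for the example. Keyed hashing is pseudonymization, not full anonymization: anyone holding the key can recompute the tokens, so the key needs the same protection as the raw data.

```python
import hashlib
import hmac

def pseudonymize(identifier: str, key: bytes) -> str:
    """Return a stable keyed token for an identifier (same key and input give the same token)."""
    digest = hmac.new(key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # truncated for readability; an assumption of this sketch

# Hypothetical key; in practice it would come from a secrets store, never from source code.
SECRET_KEY = b"replace-with-a-managed-secret"

tokens = {raw: pseudonymize(raw, SECRET_KEY) for raw in ["2392528000", "кфефензу"]}
for raw, token in tokens.items():
    print(raw, "->", token)
```

Because the same input always yields the same token under one key, records can still be linked across datasets without exposing the underlying identifier.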

How Can End-Users Validate the Accuracy of Decoded Results?

End-user validation can verify decoding accuracy by cross-checking decoded results against independent data sources, using reproducible methods and confidence estimates. Practitioners should document uncertainties, apply probabilistic reasoning, and encourage transparent, user-driven verification.
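A simple form of this cross-check is an agreement report: compare a decoded value with what independent sources return and state how many of them agree. The function name `agreement`, the report fields, and the sample values (including the deliberately mismatched one) are hypothetical. A `None` entry stands for a source that had no answer.

```python
from typing import Optional

def agreement(decoded: str, independent_values: list) -> dict:
    """Summarize how many independent sources agree with a decoded value."""
    available = [v for v in independent_values if v is not None]
    matches = sum(1 for v in available if v == decoded)
    rate: Optional[float] = matches / len(available) if available else None
    return {"checked": len(available), "matches": matches, "agreement_rate": rate}

# Hypothetical lookups: two sources agree, one disagrees, one returned nothing.
report = agreement("2392528000", ["2392528000", "2392528000", "2392528001", None])
print(report)
```

An agreement rate of `None` means the result is unverified, which is different from a rate of zero and should be documented as such.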

Conclusion

In a field where certainty is asymptotic, clarity emerges from paradox: noise aligned with structure yields insight, and structure masked as noise invites scrutiny. Advanced Record Analysis juxtaposes cryptic identifiers with transparent governance, revealing latent patterns without sacrificing interpretability. By balancing probabilistic inference with auditable processes, the method converts ambiguity into actionable signals, while maintaining accountability. Thus, precision and ambiguity coexist, guiding decision-makers through complex datasets with disciplined skepticism and principled transparency.